A billing clerk is trying to clear a backlog before lunch.

She copies part of a claim into ChatGPT to clean up the language and help with coding. The text includes a date of birth, an MRN, and a diagnosis. She is not trying to cut corners. She is trying to move faster and get the work done.

No alert fires. No workflow stops. No one gets notified.

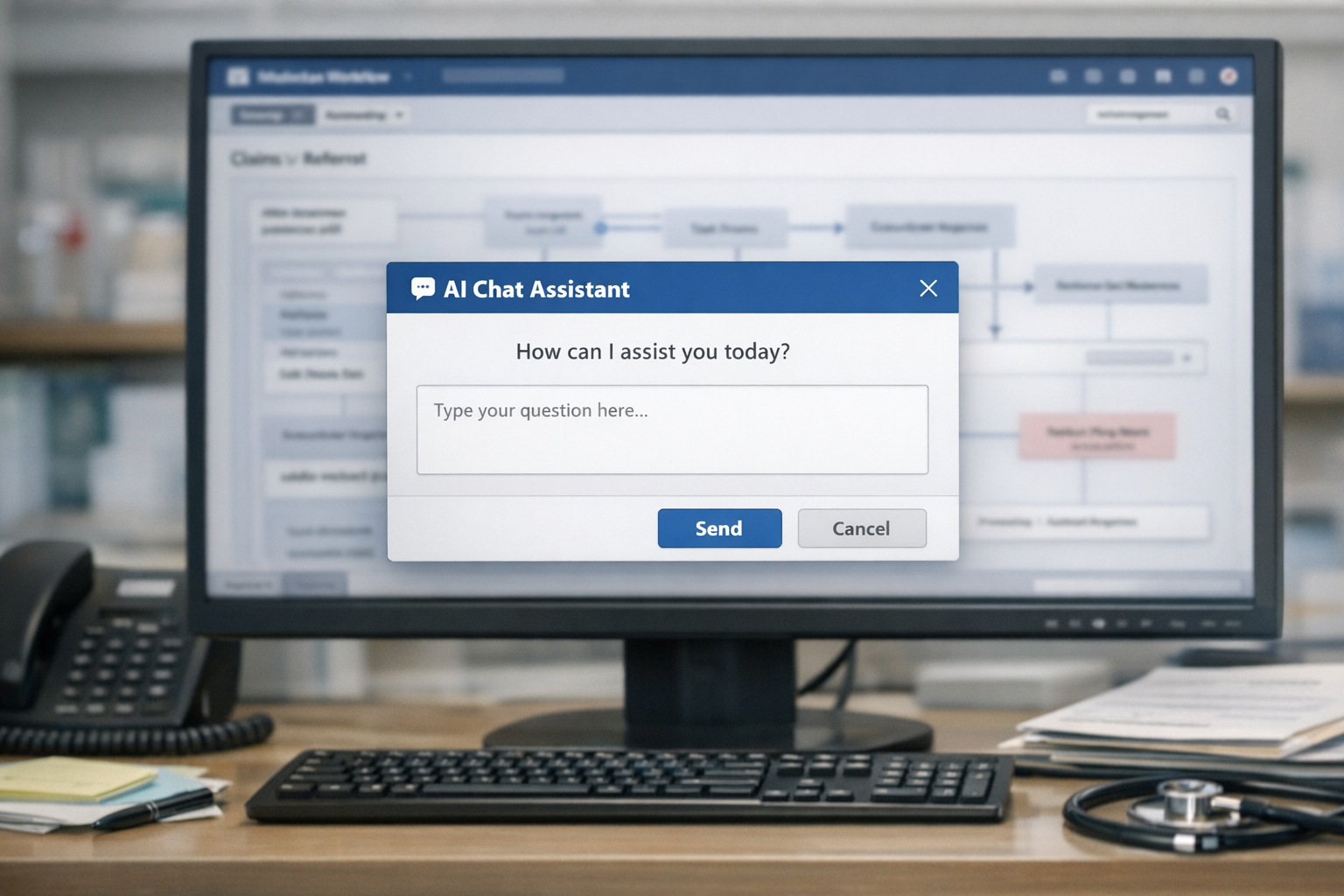

This is what shadow AI looks like in healthcare.

A lot of organizations still think their biggest AI risk lives inside the tools they have formally reviewed. The bigger risk often sits in the tools they have not reviewed. It lives in the browser, inside routine work, in moments that feel harmless to the person doing them.

Most of the people creating this risk do not think of themselves as taking a risk at all. A nurse uses Copilot to clean up a referral letter. A clinician pastes notes into Claude to organize a literature review. An admin asks an AI tool to help draft a difficult employee communication that includes accommodation details. From their perspective, they are doing exactly what modern software has been training people to do. Move faster. Automate the rough draft. Use AI where it saves time.

The gap is not intent. The gap is infrastructure.

Recognition vs. Training

Healthcare organizations have spent years teaching staff what protected health information is. But knowing what PHI is in a training module is not the same as recognizing it at the moment it is about to go into a prompt box. And the problem goes deeper than most people realize.

In an identifiable patient record, PHI is not just the names and dates. The diagnoses, medications, and lab values are also PHI, because they are linked to a person. When a billing clerk pastes a clinical note into ChatGPT, the entire note is PHI. Not just the header with the patient name.

Why Existing Controls Miss This

Most existing controls are not built for this pattern. Network monitoring may catch traffic to obviously risky destinations, but tools like ChatGPT and Copilot often live in the same category as sanctioned productivity software. Traditional DLP tools are better at spotting file movement than they are at understanding a block of copied clinical text pasted into a browser. And in most environments, there is no event that says, in plain language, "protected data just entered an AI prompt."
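To make that missing event concrete, here is a minimal sketch in Python of what a prompt-level check could look like. Everything in it is an assumption for illustration: the `PATTERNS` table, the `PromptEvent` shape, and the `inspect_prompt` function are hypothetical names, and the regular expressions are deliberately simplified. Real MRN formats vary by organization, and pattern matching alone will miss free-text clinical content, which is still PHI when it is tied to a patient.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

# Hypothetical, simplified patterns. Real identifiers vary by organization,
# and these will produce both false positives and false negatives.
PATTERNS = {
    "date_of_birth": re.compile(
        r"\b(?:DOB|date of birth)\b[:\s]*\d{1,2}/\d{1,2}/\d{2,4}", re.IGNORECASE
    ),
    "mrn": re.compile(r"\bMRN\b[:#\s]*\d{6,10}\b", re.IGNORECASE),
    # Rough shape of a diagnosis code such as E11.9 (letter, two digits, decimal part).
    "diagnosis_code": re.compile(r"\b[A-Z]\d{2}\.\d{1,4}\b"),
}


@dataclass
class PromptEvent:
    """The event most environments never emit: PHI-like text reached a prompt."""
    destination: str
    categories: list
    timestamp: str


def inspect_prompt(text: str, destination: str) -> Optional[PromptEvent]:
    """Return an event if any pattern matches, otherwise None."""
    found = sorted(name for name, pattern in PATTERNS.items() if pattern.search(text))
    if not found:
        return None
    return PromptEvent(destination, found, datetime.now(timezone.utc).isoformat())


if __name__ == "__main__":
    sample = "Pt DOB: 04/12/1961, MRN 00482913, dx E11.9 type 2 diabetes"
    print(inspect_prompt(sample, "chat.example.com"))
```

The point of the sketch is the return value, not the regexes. A check like this turns a silent paste into something a security team can count, review, and act on.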

So the organization ends up with the worst combination possible. Real usage, real exposure, and very little visibility.

The Uncomfortable Part

The uncomfortable part of this conversation is not that employees might make mistakes. They always have, in every system. It is that many organizations have no meaningful way to see this class of mistake happening at all.

If you want a realistic view of your exposure, there are three questions worth asking.

Three Questions Worth Asking

First, do your employees actually know what counts as PHI in an AI context?

It is not just names and dates of birth. It is also medical record numbers, diagnosis details linked to a patient, and provider identifiers combined with encounter information. And here is the part most people miss: in a clinical note that identifies the patient, everything clinical in that note is also PHI. The diagnosis, the medication, the lab result. It all becomes protected the moment it is tied to a person. The problem is not whether employees remember the training slide. The problem is whether they can recognize a risky prompt while moving quickly through real work.

Second, is there anything between the employee and the AI system that enforces policy?

If the honest answer is "we trained people not to do that," that is not a control. That is trust without verification.
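For contrast, here is a minimal sketch of what a control between the employee and the AI system might look like. It assumes something upstream has already decided whether the prompt contains PHI. The `APPROVED_FOR_PHI` set, the `Decision` type, and the `enforce` function are hypothetical names chosen for illustration, not a description of any particular product.

```python
from dataclasses import dataclass

# Hypothetical policy: the only destinations the organization has approved for PHI.
APPROVED_FOR_PHI = {"ai.internal.example.org"}


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def enforce(destination: str, contains_phi: bool) -> Decision:
    """Decide whether a prompt may be sent, and record why."""
    if not contains_phi:
        return Decision(True, "no PHI detected")
    if destination in APPROVED_FOR_PHI:
        return Decision(True, "destination approved for PHI")
    return Decision(False, "PHI detected and destination is not approved")


if __name__ == "__main__":
    print(enforce("chat.example.com", contains_phi=True))
    print(enforce("ai.internal.example.org", contains_phi=True))
```

The logic is trivial on purpose. What matters is that the decision is made by the system at the moment of the request, not left to memory of a training slide.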

Third, if someone asked you to prove what data went where, could you do it?

Could you reconstruct the interaction, explain why it was allowed, and show evidence of that decision? If not, you do not just have a compliance problem. You have an evidence problem.
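As a rough sketch of what that evidence could look like, the Python below builds one audit record per interaction. The field names and the `audit_record` function are assumptions for illustration. It stores a SHA-256 hash of the prompt rather than the prompt itself, so the log does not become another copy of the PHI. Whether to also retain an encrypted original is a policy choice this sketch does not make.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(user_id, destination, prompt, allowed, reason, now=None):
    """Build one audit entry: who sent what fingerprint where, and why it was decided."""
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    return {
        "timestamp": timestamp,
        "user_id": user_id,
        "destination": destination,
        # A hash lets you later confirm a specific prompt without storing its text.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "allowed": allowed,
        "reason": reason,
    }


if __name__ == "__main__":
    record = audit_record(
        user_id="clerk-042",  # hypothetical identifier
        destination="chat.example.com",
        prompt="Pt DOB: 04/12/1961, MRN 00482913",
        allowed=False,
        reason="PHI detected and destination is not approved",
    )
    print(json.dumps(record, indent=2))
```

With records like this, "prove what data went where" becomes a query instead of an investigation.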

Confidence Is Not Evidence

Some organizations will read this and feel confident. Their teams are smart. Their compliance training is solid. Their people mean well.

All of that may be true. It may also be true that a billing clerk somewhere in the organization pasted patient data into a public AI tool this week because she was trying to get through her queue a little faster.

This is why the problem is worth measuring, not assuming away. We built a free assessment that puts your team in exactly these moments.

Take the AI Readiness Assessment →

In a follow-up post, we cover how to actually measure your organization's readiness with more than a checklist.

About Three Gates

Three Gates is an AI control plane for regulated industries. Before any AI model sees your data, Three Gates classifies what's sensitive, checks whether the request is authorized, and routes it to a compliant destination. The architecture is vendor-independent and deployment-agnostic. Healthcare is the first vertical; government, legal, and financial verticals are on the roadmap. The goal is not to restrict AI usage, but to make it possible to say yes to AI safely.